CAUTION

This entire article was written in, and is displayed by, couchapps that were themselves developed inside other couchapps. Not a single line of text or supporting code was written outside a browser tab.

Treat this article as an experiment, since all the technologies used here are outside the mainstream.

Try it at your own risk: CouchDB is highly addictive 😜

Couchapps, revisited

Lesorub.pro is the website of the Russian regional lumberjack championship. The main project objectives are an offline-ready backend, integrated championship-related apps, and flexibility.

All CMS components are couchapps: the backend, the frontend renderer and the dev environment. This means the server side of the project consists of only two main parts, CouchDB 1.6.1 with nginx in front of it. They run under Ubuntu on a host with 2×1.7 GHz cores, 2 GB RAM and an SSD.

CouchDB 1.6.1 is patched to add the JS rewrite functionality newly introduced in CouchDB 2.0.

Why CouchDB

There is only one OSS database that can transparently sync with an in-browser counterpart, which is also OSS. The database is CouchDB, and its browser counterpart is PouchDB.

CouchDB, written in Erlang, is extremely, ridiculously reliable. It just never fails, for years, under load and without any significant maintenance.

Even the CouchDB query server’s support for JS functions does not affect reliability. JS code is restricted to pure, stateless*, synchronous functions. They run in 64 MB sandboxes that are allowed to fail and restart under the supervision of Erlang code.

* Actually, the functions are not purely stateless: there is a trick for memoizing a function’s results inside the JS VM runtime sandbox.

Unsurprisingly, transferring JSON between the JS VMs and Erlang code costs a lot. CouchDB has relatively low CRUD speed, but in several cases its other benefits outweigh the cons.

What is a couchapp

This technology is, well, not so modern. During 2009–2011, when CouchDB was being actively developed, there was a belief that CouchDB per se could be sufficient for web applications. And it was nearly true: it had a REST API, file attachments with absolute URLs, map/reduce, special page builder functions, doc updaters and so on.

But 2011 was the year the node.js star rose, and the couchapp concept seemed uncompetitive. This was true for me, mainly because it provided no easy way to build custom request routers. A smart, flexible router is an important part of any modern JS app; express, for example, has 7M downloads monthly.

Ok, in 2016 JS rewrites finally landed in CouchDB 2.0 — so we have routers.

Rewrite rules are no longer only arrays of mappings, which were a bit cryptic and limited. Starting with version 2.0, rewrites can also be functions. They can process incoming requests and modify the params, headers, method and even the body. The modified request then goes into CouchDB.

Since a rewrite function now sees the entire request and user name/roles, it can cover the widest variety of backend-specific cases and implement rich custom APIs for ajax requests.
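
As a rough illustration, a router of this kind might look like the sketch below. It assumes the CouchDB 2.0 convention that a rewrite function receives a request object (with `method`, `path`, `userCtx`, `body` and so on) and returns either a rewritten request or an HTTP `code` to answer directly. The routes, the `editor` role and the target `_show`/`_update` names are hypothetical and not taken from lesorub.pro; the exact contents of `req.path` may also depend on how the request reaches CouchDB. The code is ES5, since that is what the CouchDB JS engine runs.

```javascript
// Hypothetical router sketch. In a design document this function would be
// stored as a string under the "rewrites" key.
function rewrite(req) {
  // Assumption: req.path is an array of segments and the route is whatever
  // follows "_rewrite"; if that segment is absent, use the path as is.
  var at = req.path.indexOf('_rewrite');
  var route = at === -1 ? req.path : req.path.slice(at + 1);
  var roles = (req.userCtx && req.userCtx.roles) || [];

  // Custom ajax API: only users with the (assumed) "editor" role may save.
  if (route[0] === 'api' && route[1] === 'save') {
    if (req.method !== 'POST') return { code: 405, body: 'POST only' };
    if (roles.indexOf('editor') === -1) return { code: 403, body: 'Forbidden' };
    // Forward to an update function of the same ddoc (name is made up).
    return { path: '_update/save', method: 'POST', body: req.body };
  }

  // Public page: /page/<id> is rendered by a show function (name is made up).
  if (route[0] === 'page' && route[1]) {
    return { path: '_show/page/' + encodeURIComponent(route[1]) };
  }

  return { code: 404, body: 'Not found' };
}
```

Because the function is plain synchronous JS, it can be exercised outside CouchDB by calling it with a hand-made request object.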

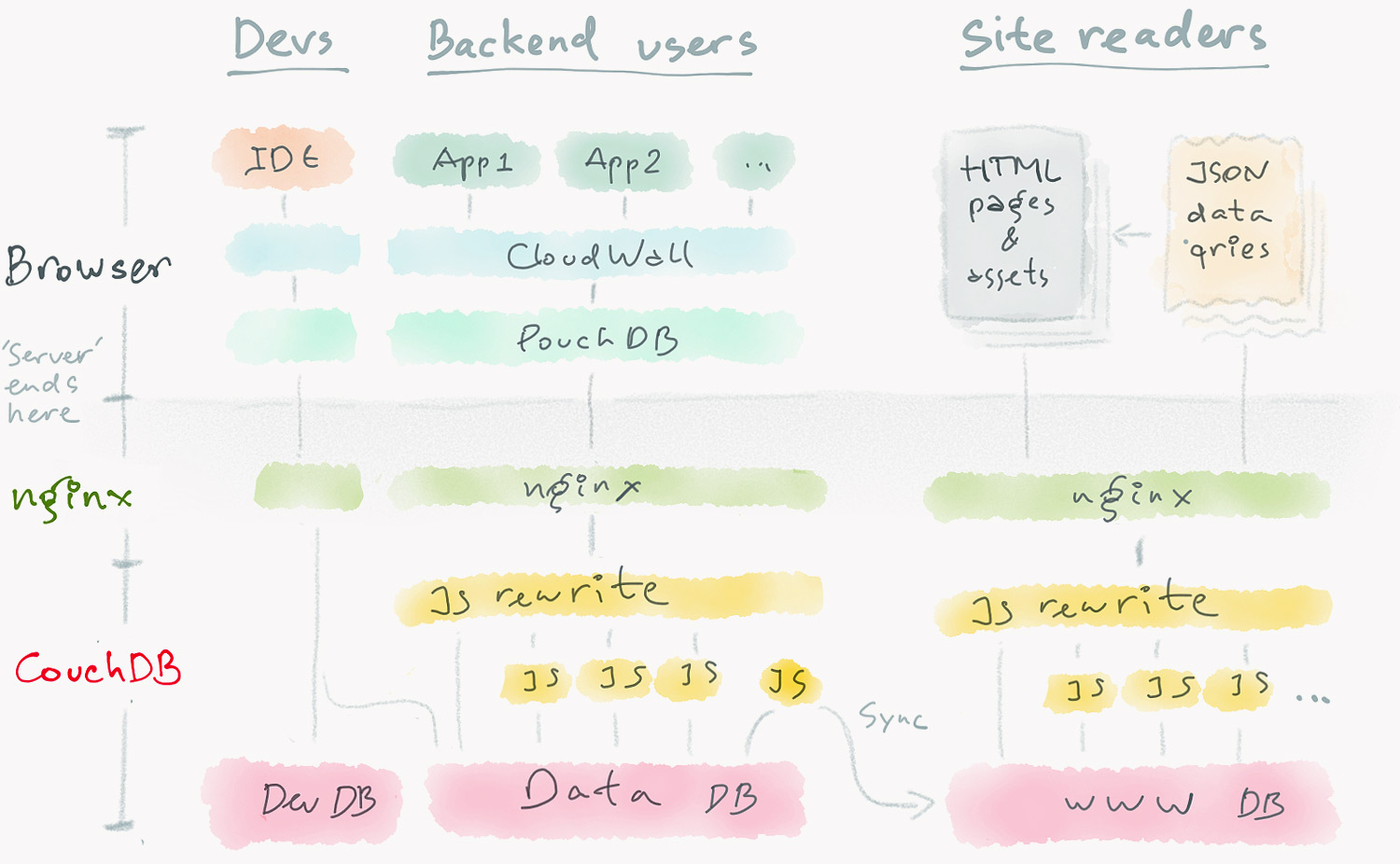

Couchapp at lesorub.pro consists of Dev, Backend and Public parts, all living at different (sub)domains. The entire picture looks (and, as for now, works) roughly like this:

No node.js at all. Nginx only gzips text-like CouchDB replies, and adds Cache-control in several cases.

Unless we need authorized users at a public part or high capacity, it all fits nicely for data-driven designs.

Offline-readiness

I have one annoying unanswered question about offline-ready apps. Is there any lib that produces progressive JPEGs in the browser?

I have nice resampling, folding, and any other image mapping on the client, but I still need Photoshop (or jpegtran server-side) to make progressive JPEGs.

The narrow meaning of ‘offline-ready app’ is a web app immune to losing its connection. That is, an app tab, once opened, must interact properly and not lose user data regardless of the connection state. An accidentally closed app must not in any way destroy data not yet sent.

It’s a bit challenging, mainly because of the complexities of binary resources. You must manage Object URLs to make some features work offline; images are a good example. Object URLs are not so easy to use: they must be freed manually, they are session-dependent, and so on.
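
To make the bookkeeping concrete, here is a minimal sketch of a pool that hands out one Object URL per key and frees it explicitly. `URL.createObjectURL` and `URL.revokeObjectURL` are the standard browser calls; the `ObjectUrlPool` helper itself and its method names are hypothetical, and the `api` argument exists only so the logic can be tried without a browser.

```javascript
// Hypothetical helper: one Object URL per key, released by hand.
function ObjectUrlPool(api) {
  // In a browser the default wraps the standard URL API.
  this.api = api || {
    create: function (blob) { return URL.createObjectURL(blob); },
    revoke: function (url) { URL.revokeObjectURL(url); }
  };
  this.urls = Object.create(null); // key -> object URL
}

// Return the URL for a key, creating it from the blob on first use.
ObjectUrlPool.prototype.get = function (key, blob) {
  if (!this.urls[key]) this.urls[key] = this.api.create(blob);
  return this.urls[key];
};

// Free one URL; the browser keeps the blob alive until this is called.
ObjectUrlPool.prototype.release = function (key) {
  if (this.urls[key]) {
    this.api.revoke(this.urls[key]);
    delete this.urls[key];
  }
};

// Free everything, e.g. when a view with images is torn down.
ObjectUrlPool.prototype.releaseAll = function () {
  Object.keys(this.urls).forEach(function (key) { this.release(key); }, this);
};
```

Since Object URLs do not survive a page reload, a pool like this would have to be refilled from stored blobs each time the app starts.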

Also, you must manage ajax requests. The code of public pages sometimes requests the DB and assets over http[s], but the same code running inside the backend must fetch data from a local in-browser DB copy, not from the web.

And, surely, an offline-ready platform must sync data immediately if the user is online, or cumulatively when the user comes back online. Happily, achieving this objective is easy, since the CouchDB + PouchDB pair is used. PouchDB’s persistent in-browser storage acts like a synced filesystem for a web app platform.
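
A minimal sketch of such a sync, assuming PouchDB's documented `sync()` call with the `live` and `retry` options. The `startSync` wrapper, the state names passed to `onState` and the way they are derived from events are illustrative assumptions, not the code used at lesorub.pro.

```javascript
// `local` is a PouchDB instance; `remote` is another instance or a CouchDB URL.
function startSync(local, remote, onState) {
  // live: keep replicating as changes arrive;
  // retry: keep trying to reconnect after the connection drops.
  return local.sync(remote, { live: true, retry: true })
    // "paused" fires both when there is nothing left to sync and when
    // replication has temporarily failed (then an error is passed).
    .on('paused', function (err) { onState(err ? 'offline' : 'idle'); })
    .on('active', function () { onState('syncing'); })
    .on('error', function (err) { onState('error', err); });
}
```

In a browser this might be called as `startSync(new PouchDB('site'), 'https://example.com/site', showBadge)`, where the database name, URL and `showBadge` callback are placeholders.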

Client app platform and IDE

Latest dev version of CloudWall:

https://cloudwall.me/_demo

The CloudWall (CW) platform was created for cases similar to this project. It is used as an IDE for app development and, in a reduced version, as a browser runtime for backend apps.

CW runs jquerymy applications. They are JSON documents stored in the DB, and they fit the CouchDB format especially well. CW has a built-in IDE for developing and testing jquerymy apps in the browser.

CW also has a built-in app for developing CouchDB design documents, Ddoc Lab.

All this stuff is sufficient to develop and manage complex web apps right in the browser. The 10-minute screencast below demonstrates the entire stack. Screencast at YouTube.

Couchapps and data-driven design

I have docs of 4 data types and 4 apps to manage them. Each app lives in a separate design document.

Couchapps are good if your design is small, flexible and data-driven. Specifically, if it’s doc-driven.

In cases when you can organize your data into typed and tagged atomic documents, CouchDB perfectly embraces both datasets and apps for processing/visualizing datasets. Applications literally follow the data: they are stored in the same CouchDB/PouchDB bucket, next to the data docs.

The offline-ready couchapp concept is framework-agnostic, in the sense that you are free to use any client-side app framework.

A good rule of thumb: one design document per app, one ddoc for the shared API, and one for login. The effect of placing JS functions in different design documents is especially visible on quad-core and more powerful machines. Roughly speaking, all JS functions of a design doc share one JS VM instance, which is a single-threaded process. So more ddocs = better utilization of CPU cores.
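
The rule of thumb above could be laid out roughly as follows. This is only a sketch: the `makeDdoc` helper, the ddoc names and the function bodies are all hypothetical. The one firm point is that CouchDB stores design document functions as source strings, which is why the helper calls `toString()`.

```javascript
// Hypothetical helper: build a design document from real functions,
// storing each one as source text. Handles a top-level function
// (e.g. "rewrites") or a one-level map of functions (e.g. "lists", "shows").
function makeDdoc(name, parts) {
  var ddoc = { _id: '_design/' + name };
  Object.keys(parts).forEach(function (section) {
    var value = parts[section];
    if (typeof value === 'function') {
      ddoc[section] = value.toString();
    } else {
      ddoc[section] = {};
      Object.keys(value).forEach(function (fn) {
        ddoc[section][fn] = value[fn].toString();
      });
    }
  });
  return ddoc;
}

// One ddoc per app, one for the shared API, one for login (names made up).
var ddocs = [
  makeDdoc('news', { lists: { page: function (head, req) { return '<h1>News</h1>'; } } }),
  makeDdoc('api', { rewrites: function (req) { return { path: '_show/ok' }; } }),
  makeDdoc('login', { shows: { form: function (doc, req) { return '<form></form>'; } } })
];
```

Each of the three documents would then be served by its own JS process, which is where the better core utilization comes from.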

Performance

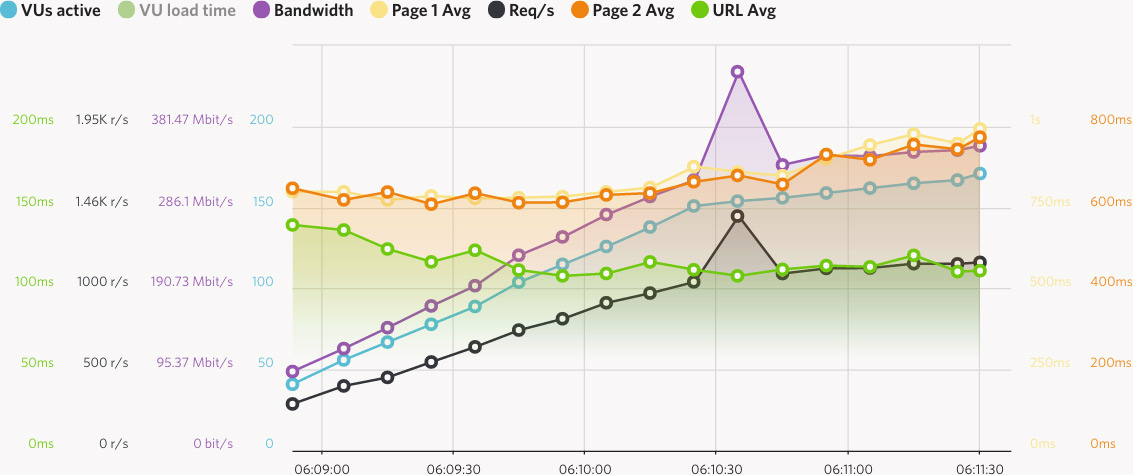

Overall performance is modest. I’ve performed some tests using Load Impact. The latest one consisted of 10–20 virtual users (VUs) who cycled through 6 public pages, staying only 2–4 seconds on each page. Each user fetched 126 different URLs, both static files and dynamically generated HTML and JSON.

The screenshots of the test are below. The right one specifically shows how long it takes to receive the landing page HTML. The route is Dublin–Frankfurt. The machine is 2×1.7GHz, 2Gb RAM with SSD.

In short, you can have at most ~40 rps per core while using a JS rewrite in 90% of cases and view/list in 15% of cases. We assume here that the data is updated rarely.

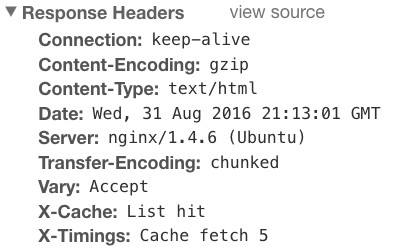

* Lesorub.pro headers. X-Timing shows that we had a cache hit inside the list function, which saved ~70 ms by skipping the getRow call and render.

Actually, the performance is even worse: I was cheating. If I honestly built the pages on each request (the renderer is 100 KB+ of JS code), I’d have ~½ the capacity and +50…70 ms latency. That’s why the results of the list functions are memoized using a dirty hack; see the headers screenshot*.
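
The hack itself is not shown here, so the following is only a guess at its general shape: a cache object that is assumed to survive between requests inside one JS VM process, keyed by page and invalidated by some version marker. The `memoRender` name, the cache location and the choice of version are all assumptions. Inside a list function, the database `update_seq` from `req.info` might serve as the version, so that any write to the DB invalidates the cached HTML.

```javascript
// Assumption: this object outlives a single request inside the JS sandbox.
var cache = Object.create(null);

// Return cached HTML while `version` is unchanged, otherwise re-render.
function memoRender(key, version, render) {
  var hit = cache[key];
  if (hit && hit.version === version) {
    return { html: hit.html, cached: true };
  }
  var html = render(); // the expensive part: fetching rows and building the page
  cache[key] = { version: version, html: html };
  return { html: html, cached: false };
}
```

A real version would also need to cap the cache size, since the sandbox memory is limited.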

But... the final result is not so bad. The average page visit time for this site is 1’20”, while the test users spent about 3 seconds per page, so the site can handle about 15×(80/3) = 400 simultaneous readers. This is much more than we expected or have.
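
The estimate can be spelled out as a tiny calculation. The function and parameter names are made up for illustration; the inputs are the mid-range figures from the test (15 VUs, about 3 seconds per page) and the 80-second average visit.

```javascript
// Scale the tested load to real readers who open pages far less often.
function estimateReaders(virtualUsers, testSecondsPerPage, realSecondsPerPage) {
  // Page loads per second that the server sustained during the test.
  var pageLoadsPerSecond = virtualUsers / testSecondsPerPage;
  // Each real reader requests one page per `realSecondsPerPage` seconds.
  return pageLoadsPerSecond * realSecondsPerPage;
}

var readers = estimateReaders(15, 3, 80); // 5 page loads/s × 80 s = 400
```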

node.js comparison

It’s good to compare couchapp performance with something similar that does not run in a couchapp environment. Happily, I have several projects running on a node.js/CouchDB CMS. This solution employs a somewhat couchapp-like architecture (a lot of JS code stored in a DB), so the comparison isn’t unfair.

About 10× more performant Node.js + CouchDB CMS on 4×2GHz, 4Gb RAM

Comparing with the previous graphs, I clearly see a ~5× increase in capacity, rps and bandwidth per CPU core.

Node.js solutions also allow significantly more flexible architectures and are not limited by the old ES5 CouchDB JS engine. However, once started, node.js-based systems are in general less configurable on the fly.

Conclusion

Well, the couchapp concept, boosted by the new JS rewrites and PouchDB, is still alive and useful in rare cases. However, building couchapps requires special tooling, deep CouchDB understanding and experience.

Still, if you have all three, you can create complex situational solutions in days, sometimes in hours, federate them, and adapt them as your projects evolve.

ermouth

2016-09-01